Mouser: Edge AI Powers Real-Time Industrial Automation

March 9, 2026

How Edge AI Has Become a “Must-Have” Requirement for Industrial Systems

Industrial systems create large volumes of data as they implement predictive maintenance and automation. Global data generation reached 181 zettabytes (ZB) in 2025, with estimates predicting 221ZB in 2026. Moving all that data to the cloud can overwhelm networks and slow response times.

Round-trip latency to the cloud can range from tens to hundreds of milliseconds in traditional enterprise networks, depending on network path and distance. That delay is too long for the real-time control and safety functions of industrial systems. Processing every event in the cloud also increases communication costs and risks system outages when connectivity is poor.

Edge artificial intelligence (AI) addresses some of these limitations by running machine learning (ML) models directly on local hardware. This approach allows systems to make decisions immediately without waiting for a remote server. This Mouser New Tech Tuesday looks at how local processing is increasingly used in factory automation and machine vision systems, where immediate responses to sensor and image data are a requirement.

Beyond Proof of Concept

In its early stages, edge AI appeared only in controlled pilots or very specific problem spots. It was built to prove a concept, not for everyday use. That’s changing fast. As tools and hardware platforms mature, edge AI is moving out of the lab and into real, always-on production systems.

A big part of this comes down to software. Today’s edge platforms are better at speaking the same language as the rest of the AI ecosystem. They support common frameworks and runtimes, making it easier to move models from development to deployed systems. This compatibility also makes it simpler to update models over time as data or requirements change.

Ease of deployment isn’t the only thing that matters, though. Reliability matters too, especially when systems run around the clock. Keeping inference local means an edge AI device doesn’t have to rely on a network connection to do its job. In environments like manufacturing floors or remote monitoring stations, that resilience is a convenience at minimum and can be critical in some scenarios: if connectivity drops, the system continues to function.
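To make the resilience point concrete, here is a minimal Python sketch of a store-and-forward pattern: the decision is made locally on every sample, and results are queued for upload whenever the uplink is unavailable. The `EdgeNode` class, the `infer` and `send` callables, and the 0.8 threshold are all hypothetical names and values chosen for illustration, not part of any specific product or library.

```python
from collections import deque


class EdgeNode:
    """Hypothetical edge node: infer locally, upload results when possible."""

    def __init__(self, infer, send, max_backlog=1000):
        self.infer = infer              # local model call, no network involved
        self.send = send                # uplink call, may raise ConnectionError
        # Bounded queue: once full, appending discards the oldest record.
        self.backlog = deque(maxlen=max_backlog)

    def handle(self, sample):
        decision = self.infer(sample)   # the decision never waits on the network
        self.backlog.append((sample, decision))
        self.flush()
        return decision

    def flush(self):
        # Send queued records in order; stop at the first failure and retry later.
        while self.backlog:
            try:
                self.send(self.backlog[0])
            except ConnectionError:
                return
            self.backlog.popleft()


# Simulated uplink and model (assumptions for the example).
link = {"up": False}
delivered = []


def send(record):
    if not link["up"]:
        raise ConnectionError("uplink unavailable")
    delivered.append(record)


def infer(sample):
    return "stop" if sample > 0.8 else "run"


node = EdgeNode(infer, send)
decisions = [node.handle(s) for s in (0.2, 0.95)]   # uplink is down here
link["up"] = True
decisions.append(node.handle(0.4))                  # backlog drains on this call
```

In this sketch the machine still receives its "stop" decision while the uplink is down, and the queued records reach the upstream system in their original order once connectivity returns.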

Edge Models Are Becoming Smaller & More Practical

One of the most significant changes in edge AI today is a shift toward smaller models. Instead of building the largest network possible, teams are focusing on models that can actually run on constrained hardware. Techniques like quantization (storing weights at lower numeric precision) and pruning (removing weights that contribute little) help strip out excess compute and memory use while keeping performance mostly intact.
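As a rough illustration of these two techniques, the Python sketch below applies magnitude pruning and then symmetric int8 quantization to a small list of weights. The weight values, the 50% sparsity target, and the function names are assumptions made for the example; production toolchains apply the same ideas per layer or per channel and usually fine-tune the model afterward.

```python
def prune_by_magnitude(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitudes."""
    n_prune = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


def quantize_int8(weights):
    """Symmetric per-tensor quantization so that w is approximately scale * q."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer code and clamp to the int8 range used here.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map int8 codes back to approximate floating-point weights."""
    return [v * scale for v in q]


# Illustrative weights (assumed values, not taken from a real model).
weights = [0.82, -0.03, 0.41, -1.27, 0.005, 0.66, -0.09, 0.30]

pruned = prune_by_magnitude(weights, sparsity=0.5)
q, scale = quantize_int8(pruned)
restored = dequantize(q, scale)

# Worst-case error introduced by quantization alone.
max_error = max(abs(a - b) for a, b in zip(pruned, restored))
```

Stored as 32-bit floats, each weight takes four bytes; as an int8 code it takes one, and the pruned zeros can be skipped or compressed further. The rounding error for each surviving weight is bounded by half of `scale`.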

These optimizations are paying off in practice: models that once required powerful processors and generous memory budgets can now run on hardware that would have been off-limits just a few years ago. This helps close the gap between what works in research environments and what can be deployed on factory floors and in field equipment. Ongoing work in this area continues to show that significant reductions in footprint and compute are possible with little loss in performance.

As a result, hardware constraints are easing. Edge AI doesn’t have to live exclusively on high-end servers sitting at the edge of the network. Engineers can deploy real-time inference on more modest embedded boards and gateways, often on the same systems already responsible for control, monitoring, and communications. That flexibility makes edge AI more applicable across a wider range of industrial systems.

Reducing Data Movement Is Becoming a Key Driver

Another trend pushing edge AI adoption forward is the cost of moving data around. Sending high-resolution sensor streams or raw machine vision frames back to the cloud is slow, eats up bandwidth, and can get expensive quickly. At scale, those costs can outweigh the benefits. This is why more organizations are looking to handle data closer to where it’s generated.
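A quick back-of-the-envelope calculation shows how fast raw frames add up. The Python sketch below assumes a single uncompressed 1920 × 1080 RGB camera at 30 frames per second and compares it with sending a hypothetical 64-byte classification result per frame; all of these figures are illustrative assumptions, and real deployments typically compress video before sending it.

```python
def stream_mbps(bytes_per_frame, fps):
    """Bandwidth of a stream in megabits per second (1 Mbps = 1,000,000 bit/s)."""
    return bytes_per_frame * fps * 8 / 1_000_000


# Assumed camera: 1920 x 1080 pixels, 3 bytes per pixel (RGB), 30 frames/s.
raw_frame_bytes = 1920 * 1080 * 3
raw_mbps = stream_mbps(raw_frame_bytes, fps=30)

# Assumed edge output: a 64-byte classification result for each frame.
result_mbps = stream_mbps(64, fps=30)

# How many times less data leaves the device when only results are sent.
reduction = raw_mbps / result_mbps

print(f"raw stream:    {raw_mbps:.3f} Mbps")
print(f"results only:  {result_mbps:.5f} Mbps")
print(f"reduction:     {reduction:,.0f}x")
```

Under these assumptions the raw stream works out to roughly 1.5 Gbps, more than a single Gigabit Ethernet link can carry, while the results-only stream is a small fraction of one megabit per second.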

By filtering, summarizing, or classifying data locally, edge AI systems reduce the load on both networks and upstream storage systems. With less dependency on remote infrastructure, edge AI deployments are better equipped to continue processing data through network slowdowns or temporary outages.
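The filter-and-summarize idea can be sketched in a few lines of Python. Here a window of raw sensor readings is reduced to a compact record, and only readings above a threshold are kept individually. The `summarize_window` function, the vibration values, and the 1.0 threshold are hypothetical and stand in for whatever model or rule a real system would run.

```python
from statistics import mean


def summarize_window(readings, threshold):
    """Reduce a window of raw readings to a small summary record."""
    # Keep individual values only when they exceed the threshold.
    anomalies = [r for r in readings if r > threshold]
    return {
        "count": len(readings),
        "mean": round(mean(readings), 3),
        "max": max(readings),
        "anomalies": anomalies,
    }


# Assumed vibration readings for one window (illustrative values).
window = [0.51, 0.49, 0.50, 0.52, 1.90, 0.48]

summary = summarize_window(window, threshold=1.0)

# Only the summary would be sent upstream; the raw window stays on the device.
print(summary)
```

The upstream system still learns that one reading spiked and what the typical level was, but the network carries one small record per window instead of every sample.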

The Newest Products for Your Newest Designs

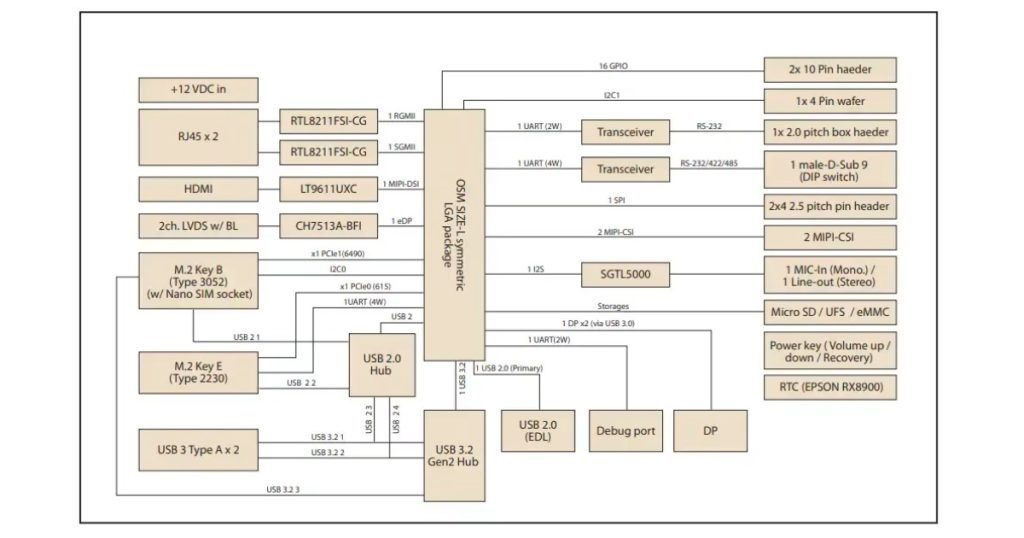

The Advantech AOM-2721 development kit is an embedded computing platform built for evaluating AI at the edge. It’s powered by a Qualcomm QCS6490 system-on-chip (SoC) with up to eight Kryo cores running up to 2.7GHz, an integrated vision processing unit (VPU) and graphics processing unit (GPU), and 8GB of high-speed LPDDR5 memory.

The board includes common industrial interfaces, such as PCIe Gen3, USB 3.2, Gigabit Ethernet, and MIPI-CSI camera inputs, along with HDMI, DisplayPort, and LVDS for displays (Figure 1), making it suitable for embedded and vision-centric AI tasks.

Tuesday’s Takeaway

Edge AI has become a “must-have” requirement for industrial systems that need fast response, predictable behavior, and lower data movement costs. As models get smaller and edge hardware continues to improve, teams can move past proof-of-concept work and deploy into systems meant for production. This technology is also changing how engineers approach development.

Platforms like the Advantech AOM-2721 make it possible to evaluate edge AI workloads earlier in the design process, so that AI is not treated as something to bolt on later. With early integration, teams designing industrial automation systems can go from testing to real-world operation without major redesigns.

For more information on Mouser solutions, visit the Mouser website.